I decided to write this post to explain as much as I can about these two projects. There have been discussions in which people demanded that these projects not be used by default in Fedora. Several issues were raised along the way, and it is not always clear which component is responsible for which issue. I have defended these projects in many discussions and became exhausted doing so over and over, so take this as an explainer that sheds some light.

Brief introduction to QGnomePlatform and Adwaita-qt

To give you some context before I go into details, you can think of Adwaita-qt as the UI representation and of QGnomePlatform as the integration between GNOME and Qt. QGnomePlatform applies your GNOME configuration and behavior to Qt apps, together with some integration bits such as dialogs and client-side decorations. Adwaita-qt is responsible for the style of the app itself, including the style of all visible parts (widgets/buttons).

QGnomePlatform

What is QGnomePlatform?

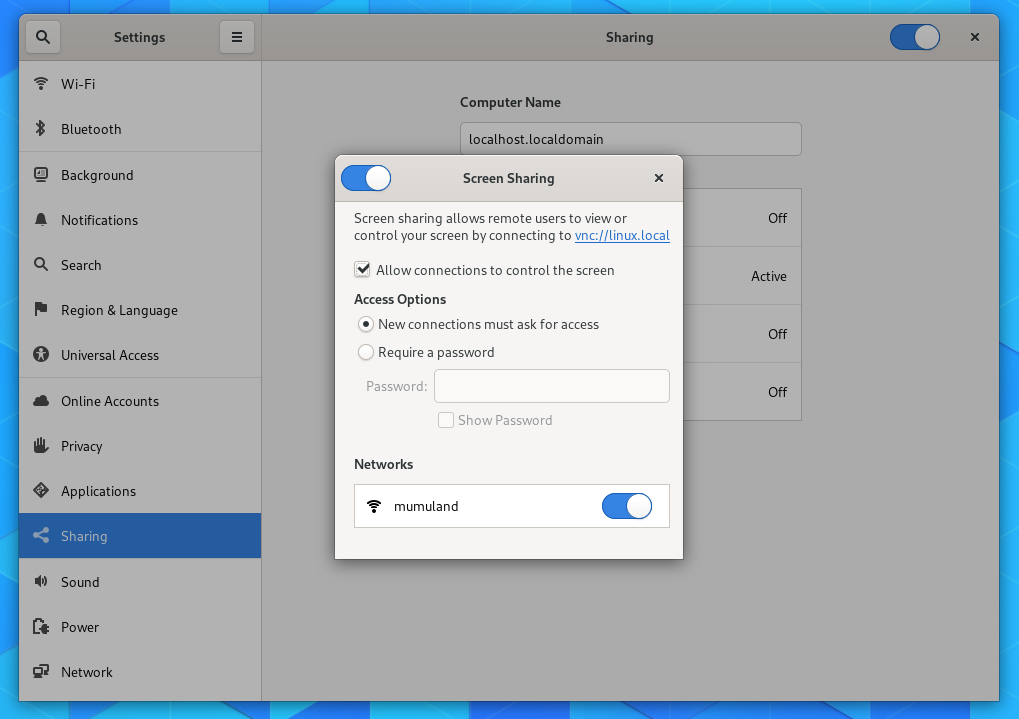

QGnomePlatform is a Qt Platform Theme (part of the Qt Platform Abstraction API). Such a plugin is responsible for integrating an app into the desktop environment, and QGnomePlatform is designed to provide that integration between Qt apps and the GNOME platform. To give an example: without any platform integration, Qt apps running on GNOME would use default styling and configuration, so your fonts, icon theme, and dialogs would not fit into the desktop. Without QGnomePlatform specifically, you would also not have GNOME-like client-side decorations.
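To make the plugin selection a bit more concrete, here is a minimal sketch of how one might prepare an environment for launching a Qt app with a specific platform theme. `QT_QPA_PLATFORMTHEME` and `QT_STYLE_OVERRIDE` are environment variables Qt reads at startup. The helper name `qt_gnome_env`, the plugin key `gnome` for QGnomePlatform, and the style name `adwaita-dark` are assumptions for illustration; check what your distribution actually installs.

```python
import os


def qt_gnome_env(base_env=None, platform_theme="gnome", style=None):
    """Return a copy of the environment with Qt theme variables set.

    platform_theme: key of the platform theme plugin (assumed "gnome" for
                    QGnomePlatform, "gtk3" for Qt's built-in GTK3 theme).
    style:          optional widget style name to force (assumption:
                    something like "adwaita" or "adwaita-dark").
    """
    env = dict(os.environ if base_env is None else base_env)
    # Qt picks the platform theme plugin from this variable.
    env["QT_QPA_PLATFORMTHEME"] = platform_theme
    if style is not None:
        # Forces a widget style regardless of what the platform theme suggests.
        env["QT_STYLE_OVERRIDE"] = style
    return env


if __name__ == "__main__":
    env = qt_gnome_env(style="adwaita-dark")
    print(env["QT_QPA_PLATFORMTHEME"], env["QT_STYLE_OVERRIDE"])
    # To launch an app with it (hypothetical binary name):
    # subprocess.run(["some-qt-app"], env=env)
```

On a GNOME session in Fedora you normally don't need any of this, because the platform theme is picked up automatically. The sketch is mostly useful for comparing how an app looks with and without QGnomePlatform.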

What does QGnomePlatform provide?

QGnomePlatform provides the following integration for Qt apps running in GNOME:

- Font configuration *

- Icon theme *

- Cursor size and cursor theme *

- Static hints (like double-click time, long-press time etc.) *

- Dialogs:

  - File dialog (both using GTK3 * and native dialog using xdg-desktop-portal)

  - Font dialog using GTK3 *

  - Color dialog using GTK3 *

- Client-side decorations

- Support for the Settings portal from xdg-desktop-portal

  - Unlike the built-in GTK3 theme, which can get everything only from GSettings

  - Brings support for light/dark theme switching introduced in GNOME 42

- Uses the Adwaita-qt theme by default (the elephant in the room) and the Adwaita color palette

  - Also provides support for additional themes, like Kvantum

- Support for Cinnamon desktop

* These can also be provided by the built-in GTK3 platform theme in Qt itself (marked only to show what QGnomePlatform does on top of it)
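As an illustration of the light/dark switching mentioned in the list, the Settings portal exposes a `color-scheme` key in the `org.freedesktop.appearance` namespace, with the values 0 (no preference), 1 (prefer dark) and 2 (prefer light). The sketch below only shows how such a value could be mapped to a style variant. The function name and the style names `adwaita` and `adwaita-dark` are assumptions for illustration, and this is not how QGnomePlatform is actually implemented.

```python
# Values of the portal's org.freedesktop.appearance "color-scheme" key.
NO_PREFERENCE = 0
PREFER_DARK = 1
PREFER_LIGHT = 2


def style_for_color_scheme(value, light="adwaita", dark="adwaita-dark"):
    """Pick a style variant name for a portal color-scheme value.

    The default style names are assumptions used for illustration only.
    """
    if value == PREFER_DARK:
        return dark
    # "No preference", "prefer light" and unknown values all map to light.
    return light


if __name__ == "__main__":
    for value in (NO_PREFERENCE, PREFER_DARK, PREFER_LIGHT):
        print(value, style_for_color_scheme(value))
```

A real implementation would also listen for the portal's change notification so that running apps switch when the user toggles the setting. That part is left out here.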

Issues QGnomePlatform gets wrongly blamed for

Client-side decorations

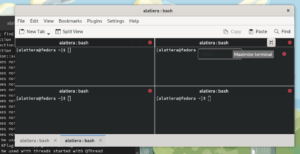

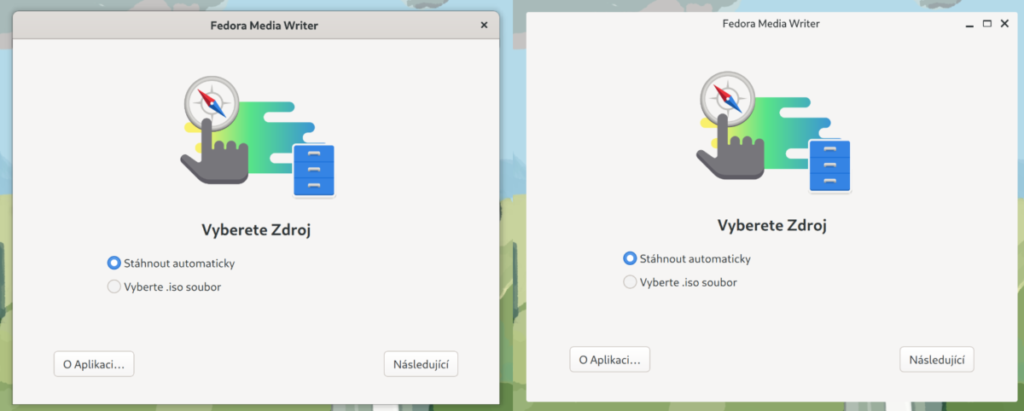

As stated above, QGnomePlatform provides an implementation of CSD. It’s actually the only Qt CSD implementation I’m aware of, excluding the reference implementation provided by QtWayland, named Bradient. Below is a screenshot comparing QGnomePlatform (left) and Bradient (right).

I have often seen people complain about missing shadow support and resizing issues. The truth is that Qt had no proper API for shadows until I introduced one in Qt 6.2. That’s the reason we don’t have shadows for Qt 5, unless you are a Fedora user, where this support has been backported and enabled in the QGnomePlatform build. I also fixed all kinds of CSD-related issues in QtWayland. Even though Qt 6 now has proper support for shadows, the reference implementation doesn’t use them.

Misplaced popups/menus

This has nothing to do with the Qt platform theme or the CSD implementation; it was actually a bug in QtWayland. Unfortunately, the fix is only in Qt 6 and cannot be officially backported to Qt 5 because it would break KDE Plasma. I at least managed to patch QtWayland in Fedora and in the Flatpak KDE runtime, using a modified version of the patch that does not affect KDE Plasma at all.

What can QGnomePlatform be blamed for?

Forcing Adwaita-qt color palette

QGnomePlatform applies the Adwaita-qt color palette to every Qt app so that applications can use the QPalette API to access the colors used in the style itself. This is useful, for example, when an app creates custom widgets that would not get styled by the QStyle. However, it creates a problem for KDE applications that use the KColorScheme API.

Examples of this issue:

The reason is that KColorScheme and QPalette are out of sync and there might be color roles that are in KColorScheme, but not in QPalette. If an app requests a color from KColorScheme that’s not in QPalette, KColorScheme will default to Breeze style and a color that’s not going to fit the Adwaita-qt style will be provided, causing the app to mix light and dark colors.
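The mechanism can be illustrated with a small toy model. This is not the real KColorScheme or QPalette API. The role names, color values, and helper functions below are all hypothetical and only mimic the lookup-with-fallback behavior described above.

```python
def luminance(hex_color):
    """Rough brightness of '#rrggbb' in the range 0..1 (no gamma correction)."""
    r, g, b = (int(hex_color[i:i + 2], 16) / 255 for i in (1, 3, 5))
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def is_dark(hex_color):
    return luminance(hex_color) < 0.5


# Hypothetical dark palette set by the platform theme (only some roles).
dark_palette = {"window": "#353535", "window_text": "#eeeeec"}

# Hypothetical light defaults that the color scheme falls back to.
light_fallback = {
    "window": "#eff0f1",
    "window_text": "#232629",
    "positive_background": "#d5f0d9",
}


def lookup(role, palette, fallback):
    """Return the palette color for a role, or the fallback if it is missing."""
    return palette.get(role, fallback[role])


def mismatched_backgrounds(background_roles, palette, fallback):
    """List background roles whose darkness differs from the window color."""
    window_dark = is_dark(lookup("window", palette, fallback))
    return [
        role
        for role in background_roles
        if is_dark(lookup(role, palette, fallback)) != window_dark
    ]


if __name__ == "__main__":
    roles = ["window", "positive_background"]
    print(mismatched_backgrounds(roles, dark_palette, light_fallback))
```

In this model the window background comes from the dark palette, while `positive_background` is missing there and silently falls back to a light default, which is the kind of light/dark mix users end up seeing.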

Luckily, we have identified a workaround that can be done in QGnomePlatform to avoid this issue. Here is the QGnomePlatform bug with more details.

I think this is the most visible and user-facing issue we currently have and get blamed for so I would like to fix this as soon as possible.

Adwaita-qt

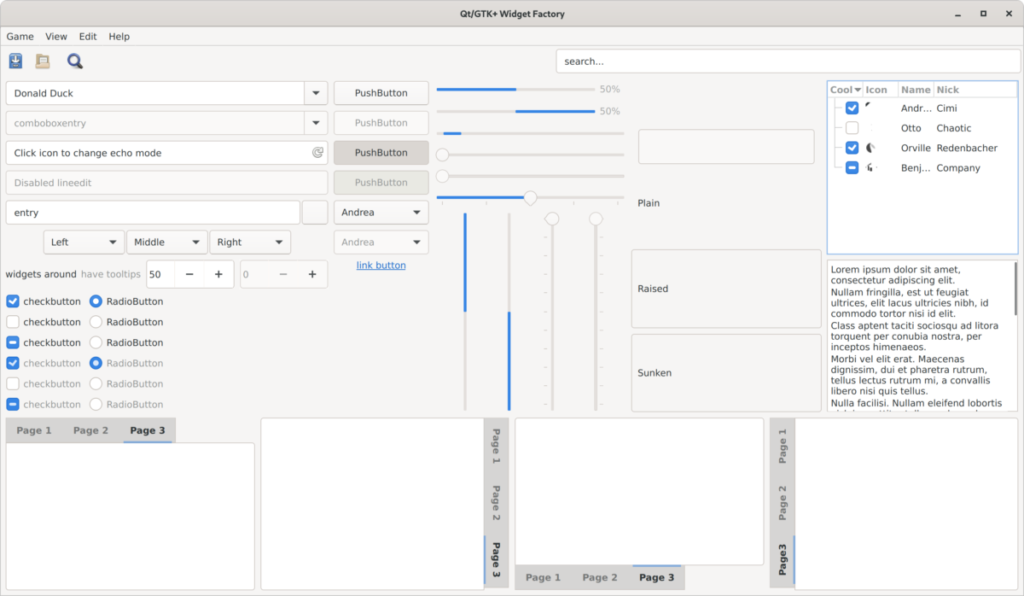

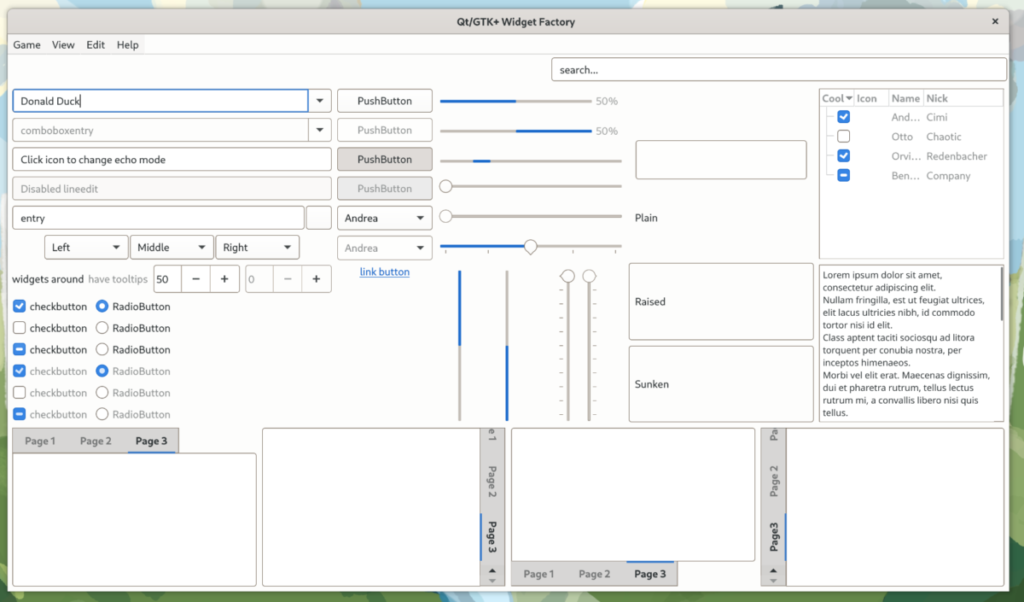

Adwaita-qt is a Qt style for widgets. Qt style is again part of Qt Platform Abstraction. You can think of it as a theme for your application. It’s what changes the look of your widgets, such as buttons and checkboxes, and it’s the only thing that changes the appearance of the application itself. For comparison, the first screenshot shows Adwaita-qt (light) and the second shows Qt’s default Fusion style on Linux without any QGnomePlatform influence, which is basically what you would get by default.

I can also add that Adwaita-qt supports HighContrast variants, which are useful for visually impaired people.

What issues can Adwaita-qt be blamed for?

I already mentioned the color mismatch issue which is not really Adwaita-qt’s fault. There are of course issues in Adwaita-qt itself and it’s far from being perfect. The whole style needs a complete overhaul, because the last one was done in 2019 and the Adwaita theme changed a lot recently with GTK4. Another issue is that while the majority of common widgets are styled just fine, there are still some widgets that are rarely used and might have issues with this style.

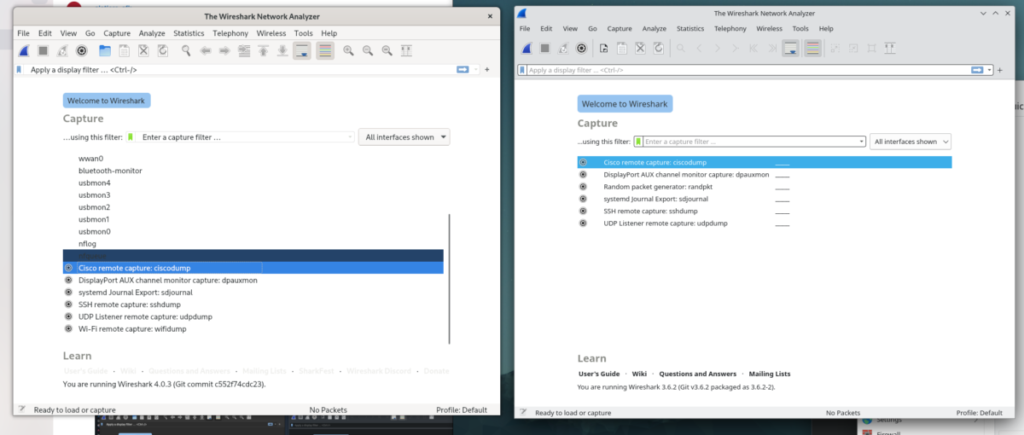

Another issue is that apps that customize standard widgets (e.g. through CSS-like Qt style sheets) might get into trouble. Below is a screenshot of Wireshark (a pure Qt app), compared to the same app with Breeze in KDE.

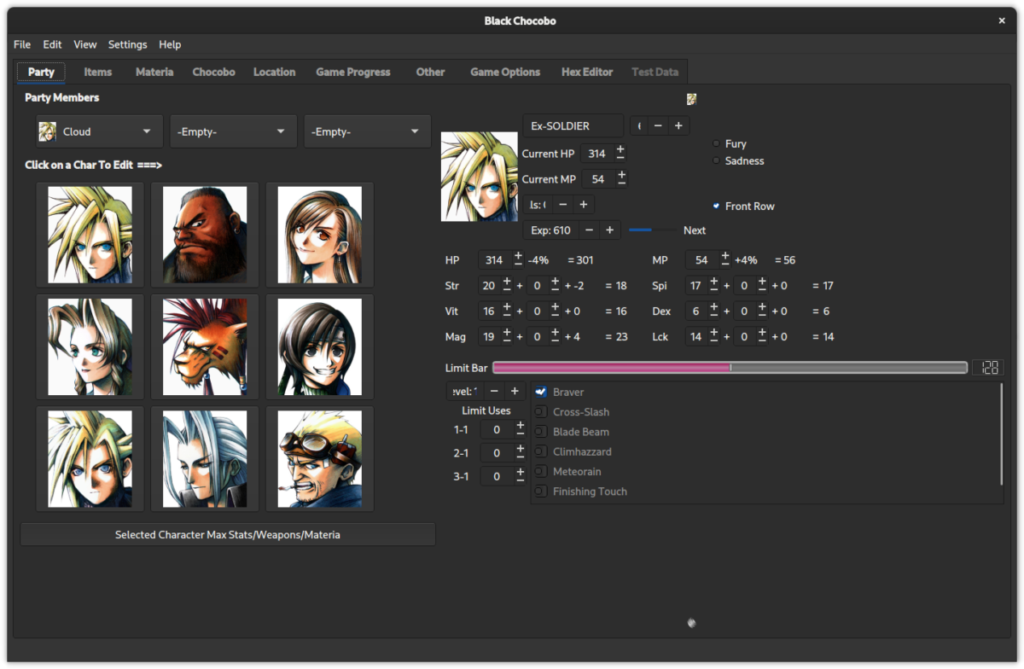

Another example can be seen in the Black Chocobo app, which uses some customization:

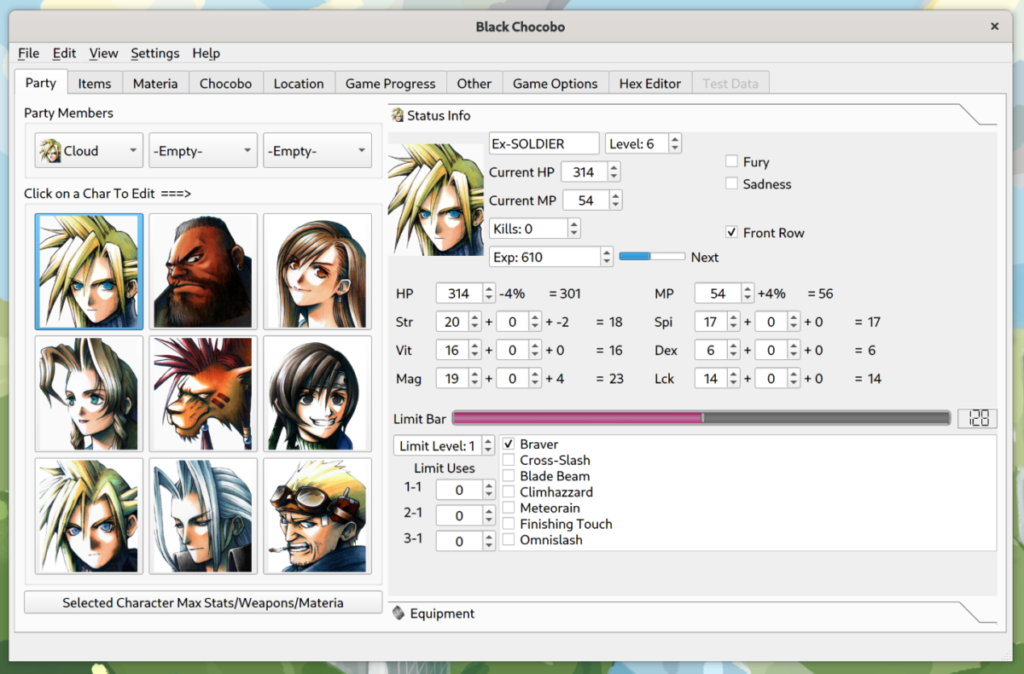

Below is Black Chocobo using Fusion style.

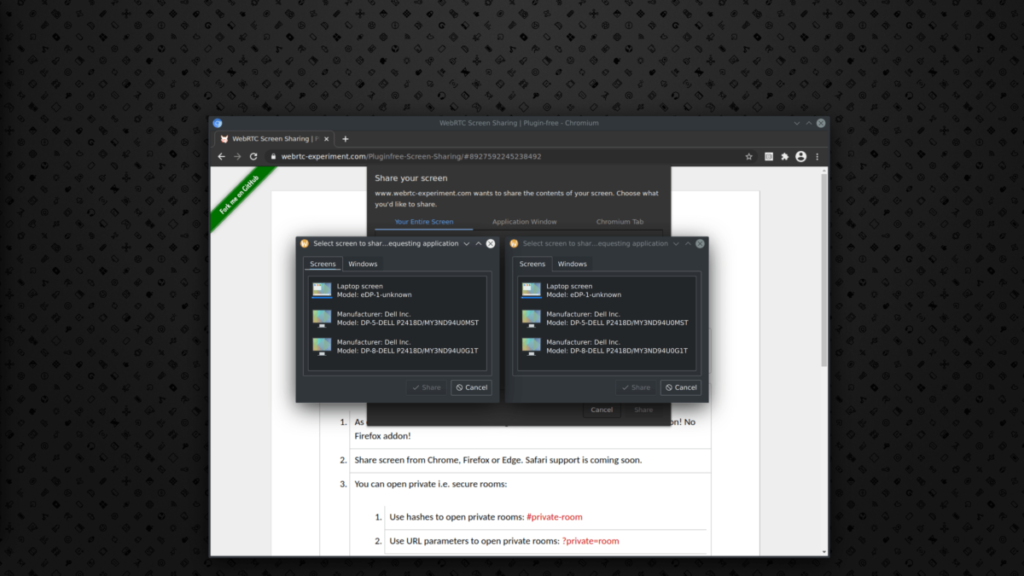

Should QGnomePlatform get removed/replaced?

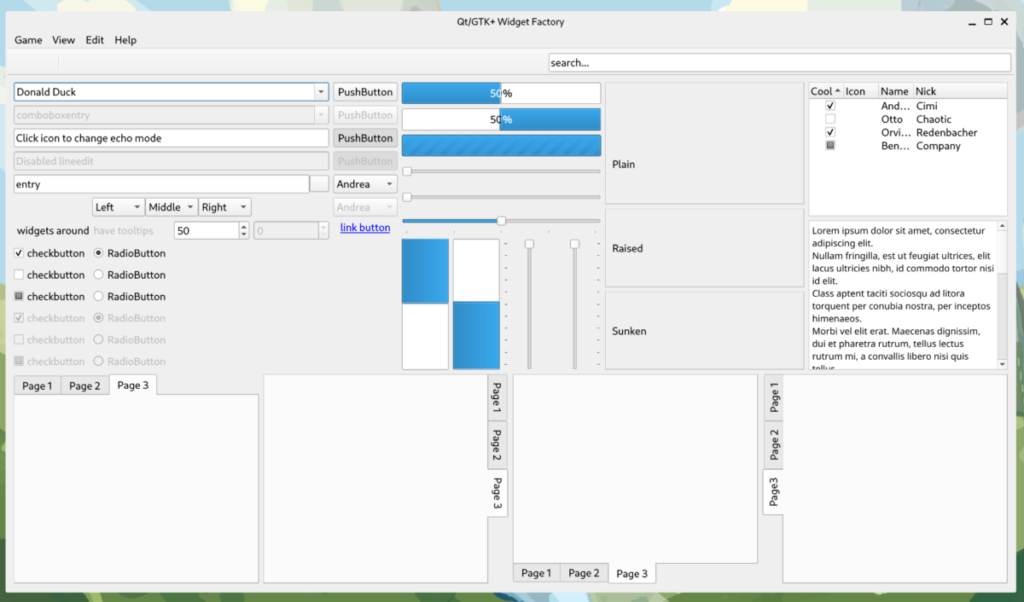

Definitely not. It has many benefits and extras compared to Qt’s default platform theme and, most importantly, gives you CSD support. Once I fix the color mismatch issue, there shouldn’t be anything left for users to complain about. Obviously, the mismatch would not be an issue if Breeze were used instead of Adwaita-qt, but it would still be an issue with Fusion, so it needs to be addressed either way. You can see a screenshot below showing the Fusion style used in combination with GNOME set to the dark theme:

Should Adwaita-qt get removed/replaced?

Maybe. It depends on the alternatives. Obviously, using Breeze would get rid of all the widget issues one might experience with Adwaita-qt. However, it is problematic to ship it by default because it brings in dependencies on KDE Frameworks and the Plasma Breeze style. With the default Fusion style you would also get many widget issues fixed, but you would still need to set the color palette through QGnomePlatform if you want Fusion to be “dark” and fit into the desktop. Otherwise you will always end up with the default “light” variant, no matter what configuration you set in GNOME.

Conclusion

I hope that this post is useful for those observing issues with Qt apps under GNOME, and will help them to understand which component is responsible for what, as well as the issues involved. In case you are interested and would like to either contribute a patch or report an issue, here are links to QGnomePlatform and Adwaita-qt repositories.